Algorithms of influence: how AI amplifies propaganda and what can be done about it

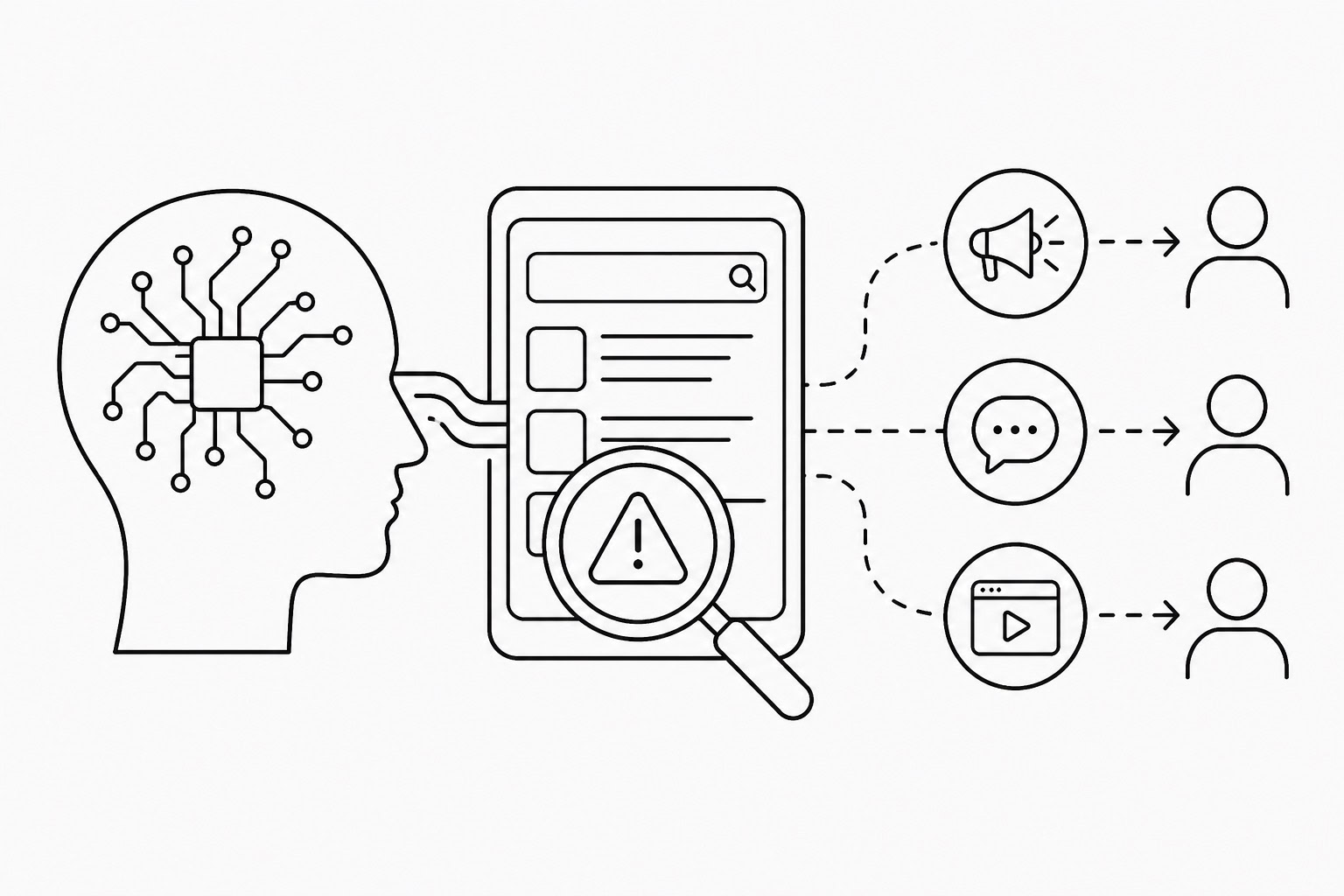

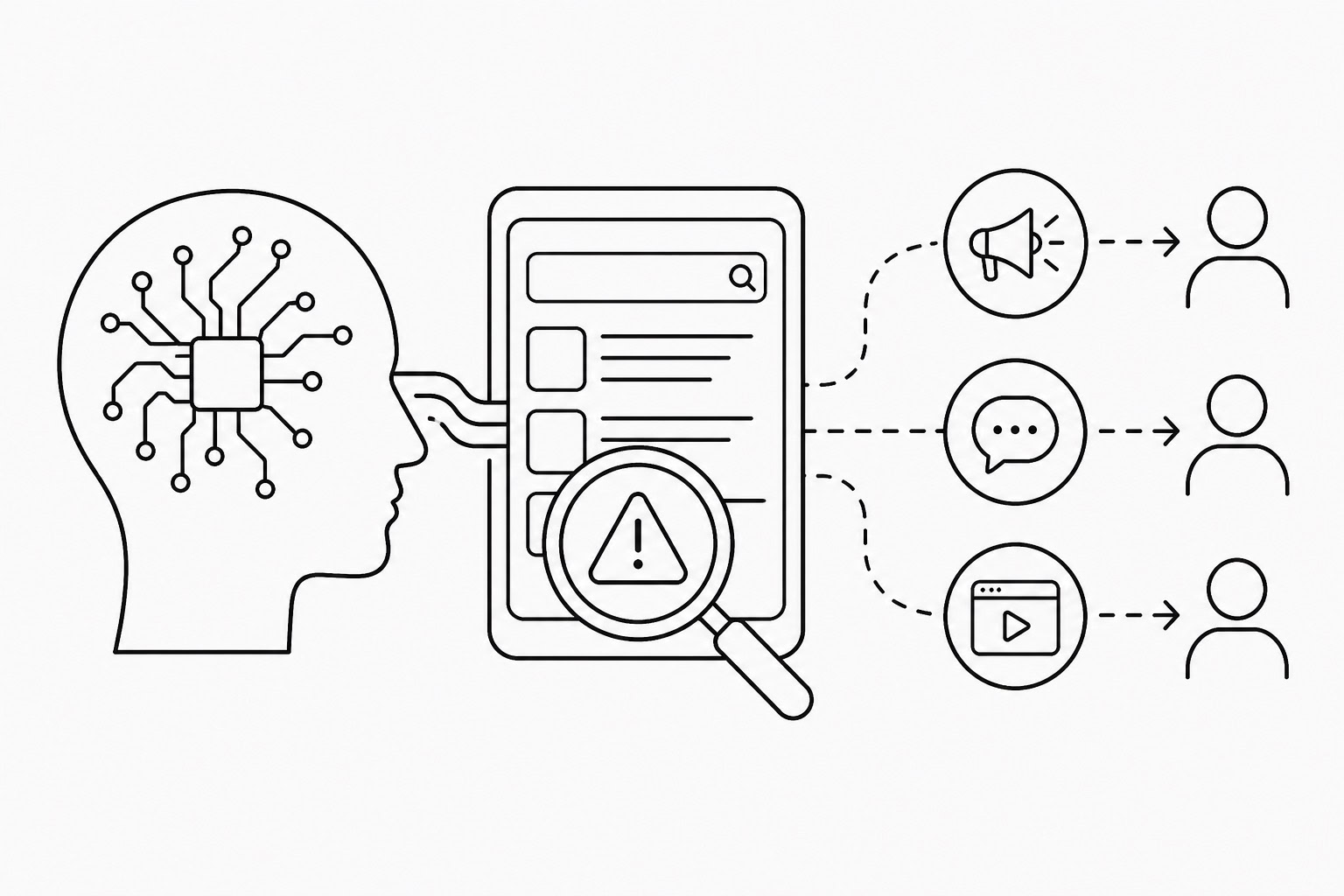

Artificial intelligence (AI) has become a new tool for shaping the information space. Algorithms not only distribute content but also influence how users perceive it. In 2026, the risks for Ukraine are linked not only to fake news but also to the way search and recommendation systems can amplify Russian narratives. Frontliner examines how this mechanism works and where responsibility begins.

Social media and search algorithms are designed to maximize engagement. As a result, emotional, conflict-driven and simplified content receives greater visibility, and these are precisely the traits commonly found in propaganda messaging. Users may end up with a distorted picture of events without ever actively seeking out such content.

Generative AI creates an additional layer of risk. It can rapidly produce large volumes of convincing text, images and videos. This lowers the barrier to creating disinformation and allows it to spread at scale with minimal resources.

How AI-powered search is changing information consumption

AI-based search systems increasingly provide ready-made answers instead of lists of sources. This changes user behavior: people are less likely to consult original sources and more likely to trust generated summaries. If distortions exist in training data or source material, they can be reproduced in the responses.

For Ukrainians, this creates the risk of hostile interpretations entering the information space unnoticed. Even neutrally worded responses may contain emphases favorable to Russian propaganda if such narratives are already present in broader online discourse. This is especially critical in discussions of war, international politics and security.

Risks to national security

The spread of disinformation through algorithms affects not only individual users but also public sentiment. The large-scale amplification of doubt, fear or distrust toward institutions can influence public behavior and weaken national resilience.

Targeted campaigns play a particularly important role. Algorithms allow operators to identify audiences with precision and tailor messages to their expectations. This makes propaganda less visible and more effective because it appears organic rather than coordinated.

How users can reduce the impact

- Verify information through several independent sources

- Avoid automatically trusting AI-generated answers

- Pay attention to sources and context

- Do not share emotionally charged content without verification

- Curate personal information feeds by limiting unreliable channels

These measures cannot eliminate risks entirely, but they can reduce their impact.

The role of the state and regulation

The government is responsible for shaping information security policy and engaging with technology companies. This includes demands for greater algorithmic transparency, restrictions on disinformation networks and support for the national information environment.

International efforts to regulate AI are also taking shape. Discussions include platform responsibility for content and requirements to label AI-generated materials. For Ukraine, participation in these processes is vital to gaining leverage over global technology platforms.

At the same time, no regulation can replace critical thinking. Algorithms only amplify signals that already exist within society. Resilience against propaganda therefore depends not only on laws and regulation but also on the everyday behavior of users.

Artificial intelligence does not create propaganda from nothing, but it makes propaganda faster, more precise and easier to scale. That is what turns AI into a direct factor affecting national security.

***

Frontliner wishes to acknowledge the financial assistance of the European Union through its Frontline and Investigative Reporting project (FAIR Media Ukraine), implemented by Internews International in partnership with the Media Development Foundation (MDF). Frontliner retains full editorial independence and the information provided here does not necessarily reflect the views of the European Union, Internews International or MDF.